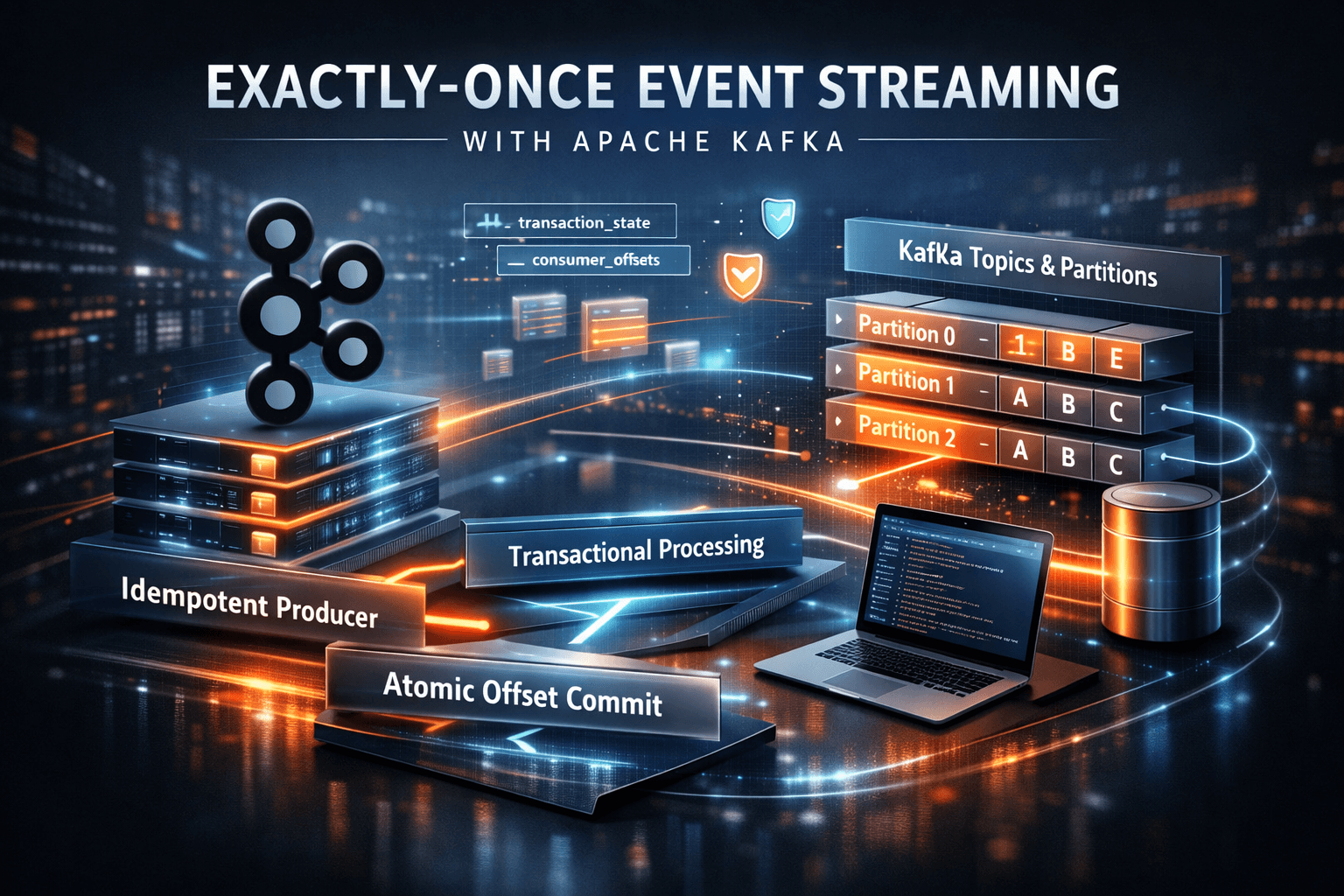

Exactly-Once Event Streaming with Apache Kafka

Event-driven architectures have become the backbone of modern distributed systems. Platforms handling financial transactions, telemetry pipelines, or real-time analytics cannot tolerate duplicate events or inconsistent processing states.

Apache Kafka provides exactly-once semantics (EOS), allowing developers to build pipelines in which the effects of each record are applied exactly once, even in the presence of failures.

Achieving this in distributed systems is extremely difficult due to:

- Network partitions

- Process crashes

- Retry mechanisms

- Concurrent consumers

- Distributed commit coordination

Kafka solves these challenges through a combination of idempotent producers, transactional messaging, and atomic offset commits.

This article examines the underlying mechanisms and architectural implications.

The Core Problem: Duplicate Processing

In distributed pipelines, retries are unavoidable.

Consider a producer sending an event:

Producer → Broker → Consumer

If a producer sends a message but the acknowledgment is lost or times out, it will retry.

The broker may already have written the record to the log. Without safeguards, retries create duplicates.

Traditional messaging systems rely on at-least-once delivery, leaving deduplication to application logic.

Kafka EOS moves this guarantee into the infrastructure layer.

Kafka Log Architecture

Kafka is fundamentally a distributed append-only commit log.

Each topic is divided into partitions.

    Topic
    ├── Partition 0
    ├── Partition 1
    └── Partition 2

Each partition is an ordered immutable sequence of records stored on disk.

    offset: 0 -> event A
    offset: 1 -> event B
    offset: 2 -> event C

Consumers track progress through offsets.

However, storing offsets separately from message processing creates a failure window.

Example failure scenario:

- Consumer processes event

- Consumer crashes before committing offset

- On restart, event is processed again

Kafka EOS solves this through transactional offset commits.
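The failure window described above can be reproduced in a toy model. The following is a minimal sketch that does not use the Kafka client at all: the class name `OffsetCommitWindowDemo`, the in-memory log, and the `crashAtOffset` parameter are all hypothetical and exist only for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Toy model of an at-least-once consumer: processing and offset commit are
// two separate steps, so a crash between them causes reprocessing on restart.
class OffsetCommitWindowDemo {
    private final List<String> log;                    // the partition's records
    private int committedOffset = 0;                   // next offset to read after a restart
    final List<String> processed = new ArrayList<>();  // side effects seen downstream

    OffsetCommitWindowDemo(List<String> log) {
        this.log = log;
    }

    // Processes records starting at the committed offset. If crashAtOffset is
    // non-negative, the consumer "crashes" after processing that record but
    // before committing its offset.
    void run(int crashAtOffset) {
        for (int offset = committedOffset; offset < log.size(); offset++) {
            processed.add(log.get(offset));            // step 1: process
            if (offset == crashAtOffset) {
                return;                                // crash: the commit never happens
            }
            committedOffset = offset + 1;              // step 2: commit
        }
    }

    public static void main(String[] args) {
        OffsetCommitWindowDemo consumer =
                new OffsetCommitWindowDemo(Arrays.asList("event A", "event B", "event C"));
        consumer.run(1);    // crash after processing "event B"
        consumer.run(-1);   // restart and run to completion
        System.out.println(consumer.processed);
        // prints [event A, event B, event B, event C]
    }
}
```

"event B" appears twice because its offset was never committed. Making the processing result and the offset advance a single atomic step is what closes this window.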

Idempotent Producers

Idempotence ensures that retries do not introduce duplicate records.

When enabled, Kafka assigns a Producer ID (PID) and attaches a sequence number to every record.

    Producer ID: 1923
    Sequence Numbers: 0,1,2,3...

The broker stores the latest sequence number per producer per partition.

If a retry arrives with an already seen sequence number, it is discarded.
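To make the sequence check concrete, here is a simplified sketch of the idea for a single partition. It is not the broker's actual implementation: real brokers validate sequence numbers per batch and keep additional metadata, and the class `SequenceDedupLog` is a hypothetical name used only for this example.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Toy model of broker-side deduplication for one partition.
class SequenceDedupLog {
    private final List<String> records = new ArrayList<>();
    // Highest sequence number appended so far, keyed by producer ID.
    private final Map<Long, Integer> lastSequence = new HashMap<>();

    // Returns true if the record was appended, false if it was a duplicate.
    boolean append(long producerId, int sequence, String value) {
        int last = lastSequence.getOrDefault(producerId, -1);
        if (sequence <= last) {
            return false;   // already seen: discard the retry
        }
        if (sequence != last + 1) {
            // A gap means an earlier record is missing, so reject the write.
            throw new IllegalStateException("out-of-order sequence " + sequence);
        }
        records.add(value);
        lastSequence.put(producerId, sequence);
        return true;
    }

    List<String> records() {
        return records;
    }

    public static void main(String[] args) {
        SequenceDedupLog log = new SequenceDedupLog();
        log.append(1923L, 0, "event A");
        log.append(1923L, 1, "event B");
        log.append(1923L, 1, "event B");    // retry after a lost acknowledgment
        System.out.println(log.records());  // prints [event A, event B]
    }
}
```

The retry is acknowledged as a duplicate rather than appended, so the log contains each event once.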

Configuration:

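    import java.util.Properties;
    import org.apache.kafka.clients.producer.KafkaProducer;

    Properties props = new Properties();
    // Placeholder address; replace with your cluster's bootstrap servers.
    props.put("bootstrap.servers", "localhost:9092");
    props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
    props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
    props.put("enable.idempotence", "true");
    // Idempotence requires acknowledgment from all in-sync replicas.
    props.put("acks", "all");
    props.put("retries", Integer.MAX_VALUE);
    // Must be 5 or lower when idempotence is enabled.
    props.put("max.in.flight.requests.per.connection", 5);

    KafkaProducer<String, String> producer = new KafkaProducer<>(props);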

Key guarantees:

- No duplicate writes during retries

- Ordering preserved within a partition

- Broker-level deduplication

Transactional Messaging

Idempotence alone is insufficient for multi-partition writes or read-process-write pipelines.

Kafka transactions extend the model by allowing multiple operations to be committed atomically.

A transaction can include:

- Producing records to multiple partitions

- Writing records to multiple topics

- Committing consumer offsets

All operations either succeed together or abort together.

Transaction Lifecycle

Transactions are coordinated by a transaction coordinator inside Kafka brokers.

Steps:

- Initialize transactions (once, at producer startup)

- Begin transaction

- Produce records

- Send offsets

- Commit transaction

Example:

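    import org.apache.kafka.clients.producer.KafkaProducer;
    import org.apache.kafka.clients.producer.ProducerRecord;
    import org.apache.kafka.common.KafkaException;
    import org.apache.kafka.common.errors.ProducerFencedException;

    // props must also set "transactional.id"; offsets and consumer are
    // assumed to come from the surrounding consume loop.
    KafkaProducer<String, String> producer = new KafkaProducer<>(props);
    producer.initTransactions();

    try {
        producer.beginTransaction();
        producer.send(new ProducerRecord<>("payments", "txn-1"));
        producer.sendOffsetsToTransaction(offsets, consumer.groupMetadata());
        producer.commitTransaction();
    } catch (ProducerFencedException e) {
        // Another producer with the same transactional.id took over; stop.
        producer.close();
    } catch (KafkaException e) {
        // Abort so that none of the writes or offsets become visible.
        producer.abortTransaction();
    }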

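If the producer crashes before commit, the coordinator aborts the transaction once transaction.timeout.ms elapses, or earlier if a new producer instance initializes with the same transactional.id.

Consumers configured with isolation.level=read_committed will ignore aborted records. The default, read_uncommitted, returns them.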
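A consumer that should only see committed transactional records might be configured along these lines. This is a sketch using plain `java.util.Properties` with Kafka's documented consumer setting names; the helper class `ReadCommittedConsumerConfig`, the broker address, and the group name are placeholders chosen for the example.

```java
import java.util.Properties;

// Builds consumer settings for reading only committed transactional records.
class ReadCommittedConsumerConfig {
    static Properties build(String bootstrapServers, String groupId) {
        Properties props = new Properties();
        props.put("bootstrap.servers", bootstrapServers);
        props.put("group.id", groupId);
        props.put("key.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        // Hide records from aborted or still-open transactions.
        props.put("isolation.level", "read_committed");
        // Offsets are committed through the producer's transaction instead.
        props.put("enable.auto.commit", "false");
        return props;
    }

    public static void main(String[] args) {
        // Placeholder values for illustration only.
        Properties props = build("localhost:9092", "payments-processor");
        System.out.println(props.getProperty("isolation.level"));  // prints read_committed
    }
}
```

The resulting `Properties` object can be passed to a `KafkaConsumer` constructor. Auto-commit is disabled here because, in a read-process-write pipeline, offsets are sent as part of the transaction.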

Exactly-Once Stream Processing

Kafka Streams integrates these primitives to provide exactly-once processing from input topics, through state stores, to output topics.

Pipeline example:

Input Topic → Stream Processor → Output Topic

Without EOS:

- Event processed twice

- Duplicate downstream state updates

With EOS:

- Input offset commit

- State update

- Output event publish

All executed within one transaction.
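In Kafka Streams, this behavior is switched on through configuration rather than explicit transaction calls. The sketch below builds the relevant settings with plain `java.util.Properties`; the class name `ExactlyOnceStreamsConfig` and the application ID and broker address are placeholders, and `exactly_once_v2` assumes Kafka Streams 3.0 or later.

```java
import java.util.Properties;

// Builds Kafka Streams settings with exactly-once processing enabled.
class ExactlyOnceStreamsConfig {
    static Properties build(String applicationId, String bootstrapServers) {
        Properties props = new Properties();
        props.put("application.id", applicationId);
        props.put("bootstrap.servers", bootstrapServers);
        // Wraps offset commits, state store changelog writes, and output
        // records in a single transaction per commit interval.
        props.put("processing.guarantee", "exactly_once_v2");
        return props;
    }

    public static void main(String[] args) {
        // Placeholder values for illustration only.
        Properties props = build("stream-processor", "localhost:9092");
        System.out.println(props.getProperty("processing.guarantee"));  // prints exactly_once_v2
    }
}
```

These properties would be passed to the `KafkaStreams` constructor together with the topology. Output only becomes visible to read_committed consumers when a transaction commits, so the commit interval directly affects end-to-end latency.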

Internal Metadata Topics

Kafka internally manages several topics for EOS.

Important ones include:

__transaction_state

Stores transaction metadata.

    transactional.id
    producerId
    state

States include:

- Ongoing

- Prepare Commit

- Complete Commit

- Prepare Abort

- Complete Abort
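The transaction states form a small state machine. The following sketch models a simplified version of it: the abort path is split into prepare and complete phases to mirror the commit path, and an initial empty state is added. The class `TransactionStateMachine` and its transition table are illustrative assumptions, not the coordinator's actual code.

```java
import java.util.EnumMap;
import java.util.EnumSet;
import java.util.Map;
import java.util.Set;

// Simplified model of the states a transactional.id moves through.
class TransactionStateMachine {
    enum State {
        EMPTY, ONGOING, PREPARE_COMMIT, PREPARE_ABORT, COMPLETE_COMMIT, COMPLETE_ABORT
    }

    // For each state, the set of states it may move to next.
    private static final Map<State, Set<State>> ALLOWED = new EnumMap<>(State.class);
    static {
        ALLOWED.put(State.EMPTY, EnumSet.of(State.ONGOING));
        ALLOWED.put(State.ONGOING, EnumSet.of(State.PREPARE_COMMIT, State.PREPARE_ABORT));
        ALLOWED.put(State.PREPARE_COMMIT, EnumSet.of(State.COMPLETE_COMMIT));
        ALLOWED.put(State.PREPARE_ABORT, EnumSet.of(State.COMPLETE_ABORT));
        // A finished transaction can be followed by a new one.
        ALLOWED.put(State.COMPLETE_COMMIT, EnumSet.of(State.ONGOING));
        ALLOWED.put(State.COMPLETE_ABORT, EnumSet.of(State.ONGOING));
    }

    private State current = State.EMPTY;

    State current() {
        return current;
    }

    // Moves to the next state, rejecting transitions the model does not allow.
    void transitionTo(State next) {
        if (!ALLOWED.get(current).contains(next)) {
            throw new IllegalStateException(current + " -> " + next + " is not allowed");
        }
        current = next;
    }

    public static void main(String[] args) {
        TransactionStateMachine txn = new TransactionStateMachine();
        txn.transitionTo(State.ONGOING);
        txn.transitionTo(State.PREPARE_COMMIT);
        txn.transitionTo(State.COMPLETE_COMMIT);
        System.out.println(txn.current());  // prints COMPLETE_COMMIT
    }
}
```

The two-phase shape matters: once the prepare state has been durably recorded, the outcome is decided, and the coordinator can finish writing markers to the affected partitions even after a failover.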

__consumer_offsets

Stores committed consumer offsets.

Offsets written within transactions guarantee that message processing and offset advancement occur atomically.

Failure Scenarios and Recovery

Kafka EOS handles several critical failure modes.

Producer Crash

The coordinator detects that the transaction has exceeded its timeout.

The transaction is aborted.

Consumers using read_committed never see partial results.

Broker Failure

Kafka replicates partitions across brokers.

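If a partition leader fails, a new leader is elected from the in-sync replicas.

Sequence numbers ensure idempotent recovery when producers retry against the new leader.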

Network Partition

Producers retry unacknowledged requests.

Duplicate messages are rejected via sequence validation.

Performance Implications

Exactly-once guarantees introduce overhead.

Major sources include:

- Transaction coordination

- Additional network round trips

- Metadata tracking

However, modern Kafka clusters maintain high throughput.

Benchmarks commonly exceed 1 million messages/sec per cluster with transactional workloads when tuned correctly.

Important tuning parameters:

- transaction.timeout.ms

- batch.size

- linger.ms

- compression.type
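As a rough illustration, the tuning parameters above could be set on a transactional producer as follows. The values are arbitrary starting points picked for this sketch, not recommendations, and the class name `TransactionalProducerTuning` and the transactional ID are hypothetical.

```java
import java.util.Properties;

// Example producer settings that trade a little latency for larger batches.
class TransactionalProducerTuning {
    static Properties build(String transactionalId) {
        Properties props = new Properties();
        props.put("transactional.id", transactionalId);
        // How long the coordinator waits before aborting an open transaction.
        props.put("transaction.timeout.ms", "60000");
        // Larger batches amortize per-request and per-transaction overhead.
        props.put("batch.size", "65536");
        // Wait briefly so that batches have time to fill.
        props.put("linger.ms", "20");
        // Compression reduces network and disk usage at some CPU cost.
        props.put("compression.type", "lz4");
        return props;
    }

    public static void main(String[] args) {
        // Illustrative values only; measure before adopting any of them.
        Properties props = build("payments-producer-1");
        System.out.println(props.getProperty("linger.ms"));  // prints 20
    }
}
```

Connection settings and serializers are omitted here and would be needed before passing these properties to a producer. Larger batches and a longer linger time generally raise throughput, while a shorter transaction timeout lets abandoned transactions stop blocking read_committed consumers sooner.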

Architectural Pattern: Event Sourcing with Kafka

Exactly-once semantics make Kafka suitable for event sourcing systems.

Architecture:

    Command Service
          ↓
    Kafka Topic (Events)
          ↓
    Materialized Views
          ↓
    Query Services

Benefits:

- Immutable event log

- Deterministic state reconstruction

- Time travel debugging

- Horizontal scalability
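Deterministic state reconstruction amounts to folding the event log into a view. The sketch below shows the idea with an in-memory list standing in for a Kafka topic; the `BalanceChanged` event type and the `AccountProjection` class are hypothetical and exist only for this example.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Rebuilds a materialized view (account balances) by replaying events in order.
class AccountProjection {
    // Hypothetical event: a signed amount applied to one account.
    static final class BalanceChanged {
        final String accountId;
        final long amountCents;

        BalanceChanged(String accountId, long amountCents) {
            this.accountId = accountId;
            this.amountCents = amountCents;
        }
    }

    // Replaying the same events in the same order always yields the same view.
    static Map<String, Long> replay(List<BalanceChanged> events) {
        Map<String, Long> balances = new HashMap<>();
        for (BalanceChanged event : events) {
            balances.merge(event.accountId, event.amountCents, Long::sum);
        }
        return balances;
    }

    public static void main(String[] args) {
        List<BalanceChanged> log = Arrays.asList(
                new BalanceChanged("acct-1", 1000),
                new BalanceChanged("acct-2", 500),
                new BalanceChanged("acct-1", -250));

        // Current state: replay the whole log.
        System.out.println(replay(log).get("acct-1"));                // prints 750
        // "Time travel": replay only a prefix to see an earlier state.
        System.out.println(replay(log.subList(0, 2)).get("acct-1"));  // prints 1000
    }
}
```

A duplicated event in the log would change the replayed balance, which is why this pattern depends on each event being written exactly once.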

Real-World Applications

Kafka EOS is widely used in:

Financial Systems

Payment processors must prevent double charges.

EOS ensures transaction events are processed exactly once.

Data Warehousing

Streaming ETL pipelines rely on deterministic ingestion.

Kafka → Spark/Flink → Warehouse

Duplicate records corrupt analytics pipelines.

Fraud Detection

Real-time pipelines evaluate events against ML models.

Duplicate signals could trigger incorrect fraud alerts.

When Not to Use Exactly-Once

EOS is powerful but not always necessary.

Avoid when:

- Pipeline tolerates duplicates

- Low latency matters more than strict delivery guarantees

- System complexity must remain minimal

In many cases, idempotent consumers are sufficient.
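An idempotent consumer accepts at-least-once delivery and makes reprocessing harmless. One common approach is to deduplicate on a unique event ID, sketched below. The class `IdempotentHandler` is hypothetical, and the in-memory set is a stand-in: in a real system the set of seen IDs and the side effect would need to be persisted together, for example in the same database transaction.

```java
import java.util.HashSet;
import java.util.Set;

// Applies each event at most once by remembering the IDs it has already handled.
class IdempotentHandler {
    private final Set<String> seenEventIds = new HashSet<>();
    private long totalCents = 0;

    // Returns true if the event was applied, false if it was a duplicate.
    boolean handle(String eventId, long amountCents) {
        if (!seenEventIds.add(eventId)) {
            return false;   // redelivery: skip the side effect
        }
        totalCents += amountCents;
        return true;
    }

    long totalCents() {
        return totalCents;
    }

    public static void main(String[] args) {
        IdempotentHandler handler = new IdempotentHandler();
        handler.handle("evt-1", 500);
        handler.handle("evt-2", 300);
        handler.handle("evt-1", 500);              // redelivered after a consumer restart
        System.out.println(handler.totalCents());  // prints 800
    }
}
```

This keeps the pipeline free of transactions, at the cost of storing and looking up event IDs in the consuming application.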

Conclusion

Exactly-once semantics in Kafka represent a major advancement in distributed data processing.

By combining:

- Idempotent producers

- Transactional messaging

- Atomic offset commits

Kafka enables deterministic event pipelines at massive scale.

For teams building financial systems, analytics pipelines, or real-time microservices, these guarantees transform Kafka from a simple messaging system into a distributed data backbone capable of strict consistency under failure conditions.

Understanding these mechanisms allows engineers to design resilient streaming architectures capable of processing billions of events reliably across distributed infrastructure.